Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

![Prevalence-adjusted Bias-adjusted κ Values as Additional Indicators to Measure Observer Agreement [letter] | Radiology](https://pubs.rsna.org/cms/10.1148/radiol.2321031974/asset/images/medium/r04jl53t01x.jpeg)

Prevalence-adjusted Bias-adjusted κ Values as Additional Indicators to Measure Observer Agreement [letter] | Radiology

Success and time implications of SpO2 measurement through pulse oximetry among hospitalised children in rural Bangladesh: Variability by various device-, provider- and patient-related factors — JOGH

Diagnostic Uncertainty and the Epidemiology of Feline Foamy Virus in Pumas (Puma concolor) | Scientific Reports

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

Reliability of Echocardiographic Indicators of Pulmonary Vascular Disease in Preterm Infants at Risk for Bronchopulmonary Dyspla

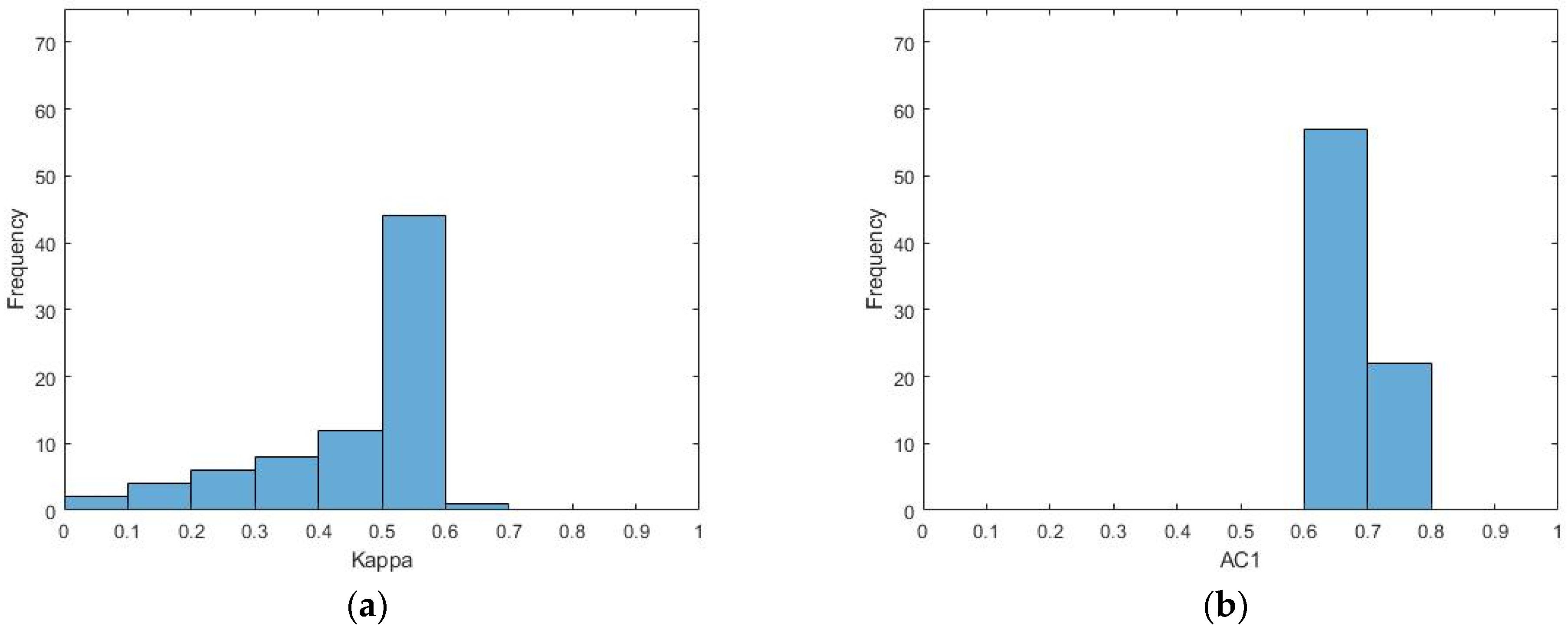

Prevalence-adjusted and bias-adjusted Kappa (PABAK) coefficients for... | Download Scientific Diagram

Intra-Rater and Inter-Rater Reliability of a Medical Record Abstraction Study on Transition of Care after Childhood Cancer | PLOS ONE

What does PABAK mean? - Definition of PABAK - PABAK stands for Prevalence-Adjusted Bias-Adjusted Kappa. By AcronymsAndSlang.com

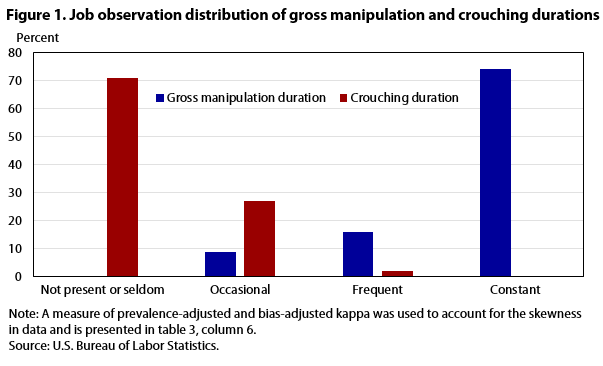

Occupational Requirements Survey: results from a job observation pilot test : Monthly Labor Review: U.S. Bureau of Labor Statistics

The disagreeable behaviour of the kappa statistic - Flight - 2015 - Pharmaceutical Statistics - Wiley Online Library

Validity of Actigraphy in Measurement of Sleep in Young Adults With Type 1 Diabetes | Journal of Clinical Sleep Medicine